
The trick that fools sites and webservers blocking Wget by User-Agent

Wget has a very handy -U option for sites that don't like wget. Use -U My-browser to tell the site you are using some commonly accepted browser.

Many sites have a robots.txt file that lists wget. Wget respects such a listing: it will not download the parts of the website that robots.txt excludes, or anything at all if that is what the file asks. It keeps respecting robots.txt even if you override the user-agent. The command-line option -e robots=off tells wget to ignore the robots.txt file.
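The two options above can be combined in one call. The following is only a minimal sketch: the URL, the browser string, and the function name fetch_as_browser are made-up placeholders, not values from any particular site.

```shell
# Hypothetical target; replace with the site you actually want.
url="https://example.com/"

# A browser-like identification string (an arbitrary example value).
agent="Mozilla/5.0 (X11; Linux x86_64; rv:109.0) Gecko/20100101 Firefox/115.0"

# -U sets the User-Agent header wget sends;
# -e robots=off makes wget ignore the site's robots.txt.
fetch_as_browser() {
  wget -U "$agent" -e robots=off "$1"
}

# Uncomment to run against the real network:
# fetch_as_browser "$url"
```

Quoting the agent string matters, since most browser identification strings contain spaces.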

Downloading recursively

The power of wget is that you can download sites recursively, meaning you also get all pages (and images and other data) linked from the front page. But many sites do not want you to download their entire site. To prevent this, they typically check how browsers identify themselves. Many sites refuse the connection or send a blank page if they detect that you are not using a web browser. You may instead get a message like this one: "Sorry, but the download manager you are using to view this site is not supported. We do not support use of such download managers as flashget, go!zilla, or getright."

Other sites blacklist wget in robots.txt, and there is a trick to ignoring that as well.

To save a download under a name you choose, the command takes the form wget -O images/anime-girls-with-questionmarks/cute-blond-girl.jpg followed by the address of the file.
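A recursive download as described above might look like the sketch below. Only -r (recursive) comes from the text; the depth limit, --no-parent, and --wait are extra options chosen here as assumptions to keep the crawl small and gentle, and the URL and function name mirror_section are placeholders.

```shell
# Hypothetical starting page; replace with a real one.
start_url="https://example.com/gallery/"

# -r            follow links recursively from the starting page
# -l 2          stop after two levels of links (assumed limit)
# --no-parent   never climb above the starting directory
# --wait=1      pause one second between requests
mirror_section() {
  wget -r -l 2 --no-parent --wait=1 "$1"
}

# Uncomment to run against the real network:
# mirror_section "$start_url"
```

By default wget stores the result in a directory named after the host, so the files from this sketch would end up under example.com/.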

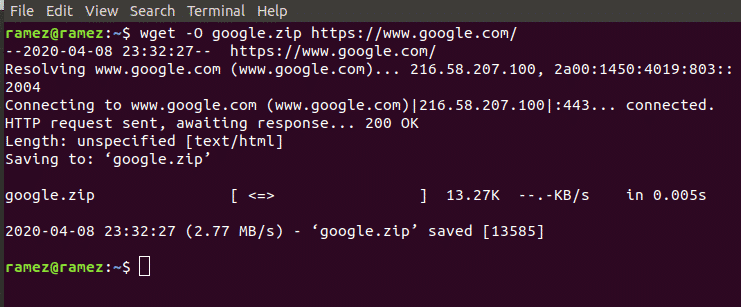

Wget's -O option for specifying the output file is one you will use a lot. Let's say you want to download an image named 2039840982439.jpg. You could ask wget to give the saved file a more useful name, such as cute-blond-girl.jpg.
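As a rough sketch of the renaming described above: the host in the URL is a placeholder, and the helper name save_as is made up for illustration. The sketch creates the target directory first on the assumption that -O will not create it.

```shell
# Hypothetical source address; only the file name comes from the example above.
url="https://example.com/2039840982439.jpg"
out="images/anime-girls-with-questionmarks/cute-blond-girl.jpg"

# Create the target directory if it is missing, then let
# -O write the download to the chosen path.
save_as() {
  mkdir -p "$(dirname "$2")"
  wget -O "$2" "$1"
}

# Uncomment to run against the real network:
# save_as "$url" "$out"
```

Note that -O writes everything to the one named file, so it suits single downloads rather than recursive ones.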
